A few days after the rollout, users started to complain that their systems had suddenly become unresponsive. Typed letters would show up slowly, one at a time, in whatever program they were using (Notepad, for example), and the system was sluggish to respond to clicks. After digging into it for a while, I discovered that if I could get Task Manager open (some systems slowed down so badly that the only option was a restart) and kill the Spark process, the system immediately returned to normal. Having watched this for a few weeks, it seems that things start to go wrong once Spark passes roughly 55 hours of continuous runtime. Task Manager on my system shows Spark consuming almost 400 MB of RAM.

I tried a script on the Openfire server that shuts Openfire down for a period of time, forcing every connected client to disconnect and reconnect, but the same thing still happens. The only workaround I've found so far is to have users exit and reopen Spark every few days.

I've attached JProfiler to the Spark instance running on my system, and after Spark has been running for a while the GC seems to be overwhelmed by excessive object instantiation. Basically, the GC kicks in, and immediately after it finishes a ton of new objects are instantiated and heap usage climbs right back up. I'm still stumped as to the actual root cause (the embedded JRE, the JRE version, a plugin, one of the new patches for JTattoo, etc.).

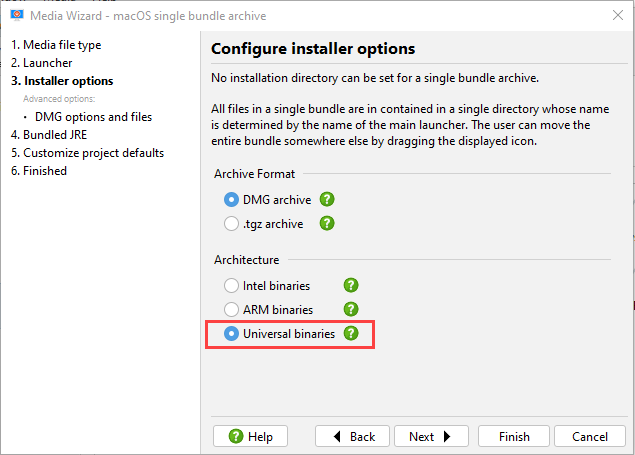
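To tell a true leak apart from allocation churn, one thing worth logging over those 55+ hours is how much heap is still in use right after a collection, not just the total. The sketch below is a hypothetical helper (`HeapWatch` is an illustrative name, not part of Spark or JProfiler) that uses only the standard `java.lang.management` API available in Java 7. As written, `main` reports on its own JVM, so to watch Spark it would have to run inside Spark's process (for example from a plugin thread) or be adapted to read the same MXBeans over remote JMX; both are untested assumptions.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryMXBean;
import java.lang.management.MemoryPoolMXBean;
import java.lang.management.MemoryType;
import java.lang.management.MemoryUsage;
import java.util.Date;

/** Hypothetical heap/GC logger; reports on the JVM it runs in. */
public class HeapWatch {

    static long toMiB(long bytes) {
        return bytes / (1024L * 1024L);
    }

    /** Total collection count and time (ms) summed over all collectors. */
    static long[] gcTotals() {
        long count = 0;
        long timeMs = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long c = gc.getCollectionCount();
            long t = gc.getCollectionTime();
            // -1 means the value is undefined for this collector; skip it.
            if (c > 0) count += c;
            if (t > 0) timeMs += t;
        }
        return new long[] {count, timeMs};
    }

    /**
     * Approximate live heap: sum of each heap pool's usage right after its
     * most recent collection. Pools are collected at different times, so
     * this is a trend indicator, not an exact figure.
     */
    static long postGcHeapUsed() {
        long used = 0;
        for (MemoryPoolMXBean pool : ManagementFactory.getMemoryPoolMXBeans()) {
            if (pool.getType() != MemoryType.HEAP) continue;
            MemoryUsage afterGc = pool.getCollectionUsage(); // null if unsupported
            if (afterGc != null) used += afterGc.getUsed();
        }
        return used;
    }

    static String snapshot() {
        MemoryMXBean mem = ManagementFactory.getMemoryMXBean();
        MemoryUsage heap = mem.getHeapMemoryUsage();
        MemoryUsage nonHeap = mem.getNonHeapMemoryUsage();
        long[] gc = gcTotals();
        return String.format(
                "%s heapUsed=%dMiB postGcHeapUsed=%dMiB heapCommitted=%dMiB nonHeapUsed=%dMiB gcCount=%d gcTimeMs=%d",
                new Date(), toMiB(heap.getUsed()), toMiB(postGcHeapUsed()),
                toMiB(heap.getCommitted()), toMiB(nonHeap.getUsed()), gc[0], gc[1]);
    }

    /** Args (optional): interval in seconds (default 60), number of samples (default: forever). */
    public static void main(String[] args) throws InterruptedException {
        long intervalSec = args.length > 0 ? Long.parseLong(args[0]) : 60L;
        long iterations = args.length > 1 ? Long.parseLong(args[1]) : Long.MAX_VALUE;
        for (long i = 0; i < iterations; i++) {
            System.out.println(snapshot());
            if (i + 1 < iterations) {
                Thread.sleep(intervalSec * 1000L);
            }
        }
    }
}
```

If `postGcHeapUsed` keeps rising over days, that points to objects being retained (a leak); if it stays roughly flat while `gcCount` climbs quickly, that fits the churn seen in JProfiler. The JDK's `jstat -gcutil <pid> <interval>` gives comparable figures from outside the process. Task Manager's number covers the whole process, not just the Java heap, so the heap figures here will generally be lower than the ~400 MB it reports.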

I rolled this out to most of the office after testing it for a few days on my system. The build is Spark 2.7.0 (from trunk on June 27th), customized with internal company branding plus SPARK-1515 and SPARK-1538, compiled with the system's Oracle JDK 1.7.0_25, and embedded with JRE 1.7.0_21 via install4j (I've also tried embedding 1.7.0_25). Plugins: Window Flashing, OTR, Roar, Spellcheck.
